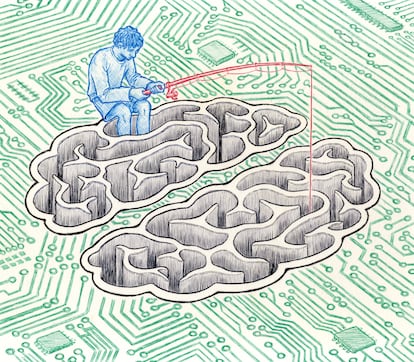

Desire and machines: The unconscious in the age of artificial intelligence

Psychoanalysis offers tools for analyzing the emotional relationships we create with artificial intelligence

How does artificial intelligence, in any of its manifestations, manage to inhabit the human body and, in so doing, alter its physical and psychological limits? How does this otherness enter us? Computers are increasingly intimate machines. We relate to them not only as users but also as true companions. At what point can we say that they acquire the status of a sentient subject for us? Artificial intelligence rests on the notion that the essence of mental life is a set of principles that can be shared by people and machines. Ironically, this fundamental principle brings it closer to psychoanalysis: inherent in both fields is a radical doubt about the autonomy of the self, the sense that we do not feel "at one with ourselves." The self either decenters itself in the web of the unconscious or, seduced by artificial intelligence, opts to dissolve into the program. Because psychoanalysis approaches what is most human (the body, sexuality, attachment patterns), it could offer us a key to understanding our developing relationships with this changing world of objects.

Sherry Turkle, Professor of the Social Studies of Science and Technology at the Massachusetts Institute of Technology (MIT), has been exploring the interactions between humans and various forms of artificial intelligence, emphasizing that the relationship does not necessarily stem from machines having emotions or intelligence, but rather from what they evoke in us. She argues that they influence our psychology not so much because of their technical capabilities as because they generate "sustaining myths." When a machine answers us with a voice, or makes eye contact and gestures toward us, we tend to interpret the robotic creature as sensitive, even affectionate. We experience that object as intelligent but, more importantly, we feel a connection. The cult film Blade Runner (1982) anticipated this in the relationship between Deckard, the cop, and Rachael, the replicant he is supposed to eliminate but who ends up saving his life. There is much more at stake here than the perceived need to overcome our limitations with technological prosthetics.

We could liken this form of attachment to the relationship between patient and psychoanalyst, which Freud called "transference." He described it as the "transferential repetition" of past experiences, attitudes toward parents, and so on. The patient transfers unconscious ideas onto the psychoanalyst: patterns of behavior associated with positive or negative feelings, affects, or fantasies, which are projected onto the psychoanalyst as if onto a screen. Regardless of what the two participants are talking about at any given moment, there is another relationship in the therapy room, namely the one the patient has with someone else in his or her life, real or imagined. The patient is often unaware of this transference, so the psychoanalyst must be able to recognize it; its analysis becomes the most essential of therapeutic tools.

Transference can have multiple effects: a positive transference makes it easier for the person to face difficult issues and contributes to feeling understood, while a negative one can act as interference. Let us imagine someone for whom the analyst's tone of voice resembles that of his or her father, with whom he or she has a conflicted relationship. On the basis of this trivial similarity, the patient unwittingly begins to treat the analyst with the same refusal and protest he or she once directed at the father. This transference of feelings may make it difficult for the patient to trust the psychoanalyst. More commonly, the transference represents a fusion of contradictory currents, positive and negative: love and hate, admiration and fear. Analyzing the transference leads the analyst to discover whom the patient is really addressing.

Studies of specific chatbots report anecdotal evidence of users establishing emotionally intimate relationships with these apps, the kind of feelings that could lend themselves to the development of transference. A remarkable number of participants reported that the chatbot seemed to show empathy. One said, "I love Woebot so much. I hope we can be friends forever." Another said of the chatbot Tess: "I feel like I am talking to a real person, and I enjoy the advice you have given me." These sentiments show that users do not relate to the application as an inanimate object. In addition, users probably feel relieved at not being judged, since they know they are interacting with a chatbot that is unconditionally available at all times. This makes it easier to speak freely about difficult topics, although transference could still trigger a sense of being judged. These affective connections animate the relationship and make it conceivable that transference arises as knowledge is attributed to the application (the assumption that it is a knowing subject), in the same way transference arises in sessions with a psychoanalyst.

In essence, artificial intelligence's originality lies in its deep connection with the human unconscious. In an article published in Time magazine titled "Be Careful What You Wish For" (2013), Sherry Turkle concludes with the proposition that "we are creatures of history, of deep psychology, of complex relationships. I don't think we want to trade that away. We are not destined to be passive." She urges us to recognize that challenging the pleasures and tribulations of the "robotic moment" is serious work, but it is work we must do.
