Decoder reads thoughts recorded by a brain scanner

Researchers have used the artificial intelligence system GPT to capture the meaning of sentences, instead of literal words

Three subjects listened to a podcast from the New York Times and monologues from the Moth Radio Hour program while having their brains scanned. U.S. researchers then used a decoder they had designed to convert the brain scans not only into complete sentences, but into texts that closely reproduced what the subjects had heard. According to the results, published Monday in the scientific journal Nature Neuroscience, this so-called “semantic” decoder was also able to verbalize what the subjects were thinking and, what’s more, it could even understand what was going through their heads while they were watching a silent movie.

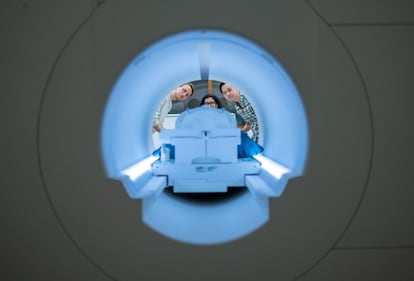

Since the beginning of the century, and particularly in the last decade, great advances have been made in the design of brain-computer interfaces (BCIs). Most research has been geared towards improving communication for people who are unable to speak or move their muscles. But most of these systems involve opening up a person’s skull and placing an array of electrodes directly into the brain. Another less invasive approach relies on functional magnetic resonance imaging (fMRI). Here, nothing is implanted in the brain: the person lies inside a scanner that records activity from outside the head. The scanner does not record neuronal activity directly, but rather the changes in blood oxygen levels that neural activity causes. This, however, limits the resolution of the brain images: firstly, because the recording is made from the outside, and secondly, because changes in blood oxygen unfold over intervals of up to 10 seconds, a period in which many words can be said.

To solve these problems, a group of researchers from the University of Texas in the United States has turned to the artificial intelligence system GPT, the same one on which the popular ChatGPT bot is based. This language model, developed by the OpenAI artificial intelligence lab, uses deep learning to generate text. In this investigation, researchers trained GPT with fMRI images of the brains of three people who were played 16 hours of audio from the New York Times podcast and the Moth Radio Hour program. In this way, they were able to match what the subjects heard with its representation in their brains. The idea is that, when the subjects later hear new text, the system can anticipate what it is from the patterns it has already learned.

“This is the original GPT, not like the new one [ChatGPT is supported by the latest version of GPT, version 4]. We collected a ton of data and then built this model, which predicts brain responses to stories,” Alexander Huth, a neuroscientist at the University of Texas, said in a webcast last week. With this method, the decoder proposes sequences of words “and for each of those words that we think might come next, we can measure how well that new sequence sounds and, in the end, see if it matches the brain activity that we observe,” explained Huth.
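The propose-and-compare loop Huth describes can be illustrated with a toy sketch. This is not the researchers' code: the five-word vocabulary, the three-number "responses" and the function names are all hypothetical stand-ins, and a real system would use a language model to propose words and a trained model to predict fMRI responses. The sketch only shows the general idea of keeping the word sequences whose predicted responses best match what was observed.

```python
# Toy illustration only: every name and number here is a hypothetical stand-in.

# Made-up "semantic" vector for each word in a tiny vocabulary.
WORD_FEATURES = {
    "the": (0.1, 0.0, 0.0),
    "dog": (0.9, 0.1, 0.0),
    "cat": (0.8, 0.3, 0.0),
    "ran": (0.0, 0.9, 0.2),
    "slept": (0.0, 0.2, 0.9),
}


def propose_next_words(sequence):
    """Stand-in for the language model: every vocabulary word is a candidate."""
    return list(WORD_FEATURES)


def predict_response(sequence):
    """Stand-in for the brain-response model: one predicted vector per word."""
    return [WORD_FEATURES[word] for word in sequence]


def score(sequence, observed):
    """Negative squared error between predicted and observed responses.

    Only the time steps covered by the sequence are compared (zip truncates),
    and a higher score means a closer match.
    """
    predicted = predict_response(sequence)
    return -sum(
        (p - o) ** 2
        for pred_vec, obs_vec in zip(predicted, observed)
        for p, o in zip(pred_vec, obs_vec)
    )


def decode(observed, beam_width=2):
    """Extend candidate sequences word by word, keeping the best few each step."""
    beams = [[]]
    for _ in range(len(observed)):
        candidates = [seq + [w] for seq in beams for w in propose_next_words(seq)]
        candidates.sort(key=lambda seq: score(seq, observed), reverse=True)
        beams = candidates[:beam_width]
    return beams[0]


# Simulated noisy "recording" for the phrase "the cat slept".
observed = [(0.15, 0.05, 0.0), (0.75, 0.25, 0.05), (0.05, 0.25, 0.8)]
print(" ".join(decode(observed)))  # prints: the cat slept
```

Keeping several candidate sequences at each step, rather than only the single best one, lets the search recover if an early word is a poor guess.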

This decoder has been called semantic, and rightly so. Previous interfaces recorded brain activity in motor areas that control the mechanical basis of speech, that is, the movements of the mouth, larynx and tongue. “What they can decode is how the person is trying to move their mouth to say something. Our system works on a very different level. Instead of looking at the low-level motor domain, it works at the level of ideas, of semantics, of meaning. That is why it does not record the exact words that someone heard or pronounced, but rather their meaning,” said Huth. The scans recorded the activity of various brain areas, but to achieve this feat, researchers focused more on those related to hearing and language.

Once the model was trained, the scientists tested it with half a dozen people who had to listen to texts different from those used to train the system. The machine decoded the fMRI images and closely approximated the stories that were told. To confirm that the device was operating on the semantic level and not the low-level motor domain, the scientists repeated the experiments, but this time asked the participants to imagine a story in their mind and then write it down. The researchers found there was a close correspondence between what was decoded by the machine and what was written by the participants. In a third round of experiments, the scientists went further and had subjects watch scenes from silent movies. Although the semantic decoder made more errors with specific words in this case, it still captured the meaning of the scenes.

Neuroscientist Christian Herff leads research into brain-computer interfaces at the University of Maastricht in the Netherlands, and almost a decade ago he created a BCI that allowed brain waves to be converted into text, letter by letter. Herff, who did not participate in the study, says he was impressed by the incorporation of the GPT language predictor. “This is really amazing, since the GPT inputs contain the semantics of speech, not the articulatory or acoustic properties, as was done in previous BCIs,” he said. “They show that the model trained on what is heard can decode the semantics of silent films and also of imagined speech.” Herff added that he is “absolutely convinced that semantic information will be used in brain-machine interfaces for speech in the future.”

Arnau Espinosa, a neurotechnologist at the Wyss Center Foundation in Switzerland, published a paper last year on a BCI that allowed an ALS patient to communicate, following a completely different approach. With respect to the new research from the University of Texas, Espinosa said: “Its results are not applicable today to a patient, you need magnetic resonance equipment that is worth millions and occupies a hospital room; but what they have achieved has not been achieved by anyone before.” The interface in Espinosa’s study was different. “We were going for a signal with less spatial resolution, but a lot of temporal resolution. We were able to know in each microsecond which neurons were activated and then we were able to go to phonemes and determine how to create a word,” he added. For Espinosa, in the future, BCIs will need to combine several systems and take in different signals. “Theoretically, it would be possible; much more complicated, but possible.”

Rafael Yuste, a Spanish neurobiologist at Columbia University in New York, has been warning about the dangers posed by advances in his discipline for some time. “This research and the Facebook study [on a mind-reading device] demonstrate the possibility of decoding speech using non-invasive neurotechnology. It is no longer science fiction,” he said in an email. “These methods will have huge scientific, clinical and commercial applications, but, at the same time, they highlight the possibility of deciphering mental processes, since inner speech is often used to think. This is one more argument for why mental privacy must be urgently protected as a fundamental human right.”

Anticipating these fears, the researchers from the University of Texas wanted to see if they could use their system to read the minds of other subjects. Fortunately, they found that the model trained with one person was not able to decipher what another person heard or saw. To be sure, they ran one last series of tests. This time they asked the participants to count by sevens, think of and name animals, or make up a story in their head while listening to the podcasts. In this experiment, the GPT-based interface failed miserably, despite all the technology that goes into an MRI machine and the data handled by the AI system. For the researchers, this shows that cooperation is needed to read a person’s mind. But they also warn that their research was based on the patterns of half a dozen people. Perhaps with the data of tens or hundreds of people, they admit, there could be a real danger.
